You've heard the promise: AI-powered automation that builds Meta campaigns in seconds, optimizes based on performance data, and scales your advertising without the manual grind. The pitch sounds compelling. But here's the reality most marketers face—you've got maybe 7-14 days to figure out if this technology actually delivers for your business, or if it's just another shiny tool that creates more complexity than it solves.

Starting a free trial of a Meta ads automation platform represents a pivotal opportunity, but most marketers squander it by jumping in without a plan. They click around randomly, test features they don't understand, and emerge from the trial period with no clear answer to the only question that matters: will this save me time while improving results?

The trial period is your chance to validate whether automation can genuinely transform your advertising workflow before committing budget. Yet the typical approach—casually exploring features when you have a spare moment—barely scratches the surface of what these platforms can do.

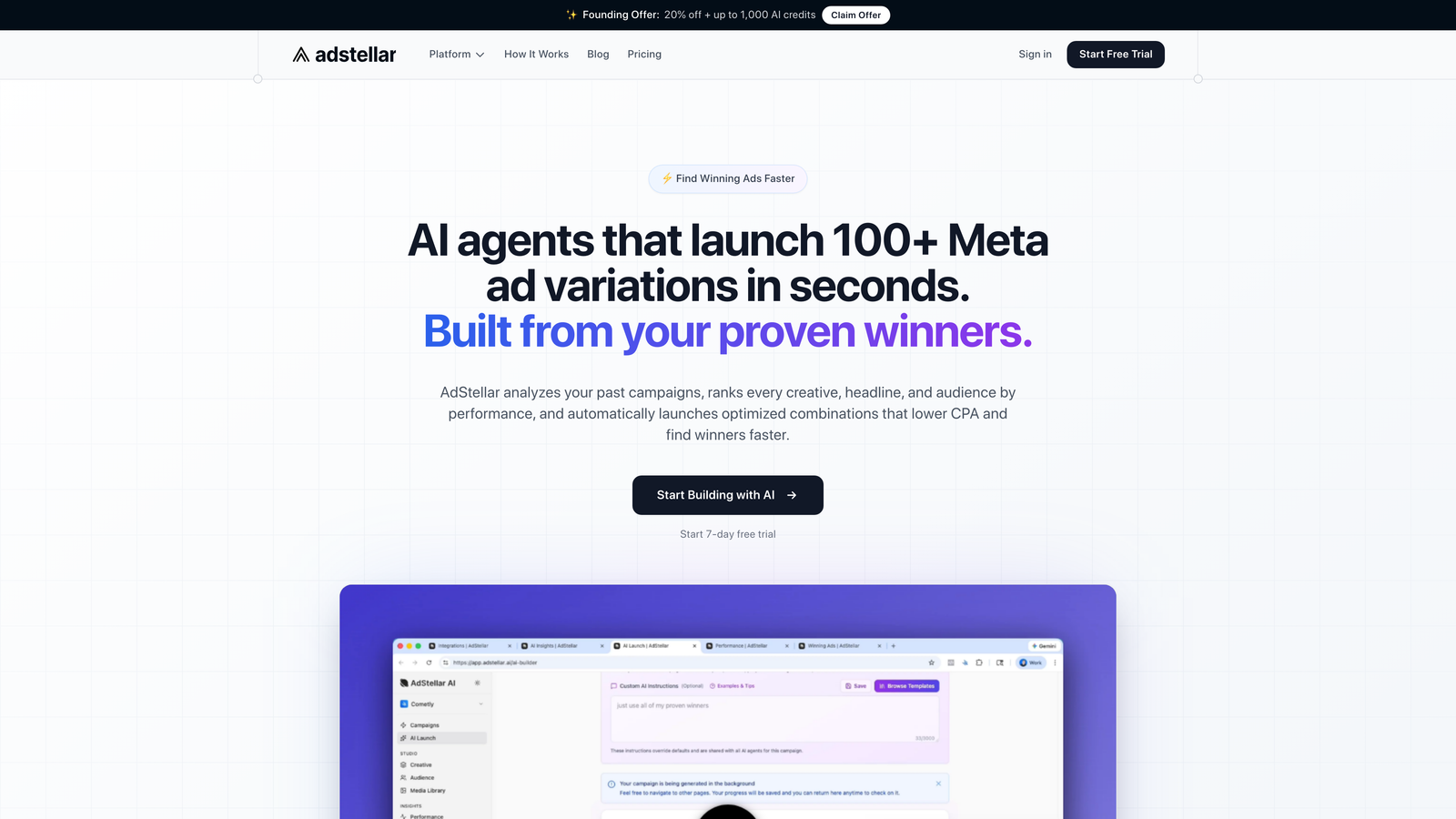

This guide walks you through seven battle-tested strategies to extract maximum value from your Meta ads automation free trial. Whether you're evaluating AdStellar AI or another platform, these approaches will help you make a confident, data-backed decision about whether automation belongs in your marketing stack. Think of your trial as a controlled experiment, not a casual test drive.

1. Audit Your Current Workflow Before Day One

The Challenge It Solves

Without baseline metrics, you're flying blind. Many marketers activate their trial, use the platform for a week, and then struggle to articulate what actually improved. Was campaign creation faster? By how much? Did performance increase, or did you just feel less stressed? The absence of documented "before" data makes it impossible to measure the "after" impact.

This isn't just about having vague impressions—it's about having concrete evidence to justify a purchasing decision to your team or clients.

The Strategy Explained

Before you even sign up for the trial, spend 2-3 hours documenting your current state. Track how long it takes to build a typical campaign from concept to launch. Note how many campaigns you can realistically create per week. Document your current performance metrics: average CTR, CPC, conversion rate, and ROAS across your active campaigns.

This audit creates a measurement framework. When you complete your trial, you'll compare new metrics against these baselines to quantify improvement. The difference between "this felt easier" and "campaign creation time dropped from 45 minutes to 8 minutes" is the difference between a guess and a business case. Understanding how Meta ads automation compares with manual creation helps establish what improvements to expect.

Implementation Steps

1. Time yourself building your next 2-3 campaigns manually, tracking each phase: research, audience setup, creative selection, copy writing, budget allocation, and launch.

2. Document your current weekly campaign output: how many new campaigns, ad sets, and creative variations you typically launch.

3. Pull performance data for your last 30 days of Meta advertising: aggregate CTR, CPC, conversion rate, and ROAS to establish performance benchmarks.

4. Note current pain points in writing: where you get stuck, what takes longest, which decisions feel like guesswork.
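The benchmark step above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the `AccountTotals` fields and the sample numbers are assumptions, and you would substitute the 30-day totals from your own Ads Manager export. It computes each rate from account-wide totals rather than averaging per-campaign rates, so large campaigns are weighted appropriately.

```python
from dataclasses import dataclass


@dataclass
class AccountTotals:
    """30-day account totals (field names are illustrative, not an export schema)."""
    impressions: int
    clicks: int
    spend: float      # ad spend in account currency
    conversions: int
    revenue: float    # revenue attributed to the ads


def safe_div(numerator: float, denominator: float) -> float:
    """Return 0.0 instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def baseline_metrics(t: AccountTotals) -> dict[str, float]:
    """Compute aggregate benchmarks from totals."""
    return {
        "ctr": safe_div(t.clicks, t.impressions),              # clicks per impression
        "cpc": safe_div(t.spend, t.clicks),                    # cost per click
        "conversion_rate": safe_div(t.conversions, t.clicks),  # conversions per click
        "roas": safe_div(t.revenue, t.spend),                  # revenue per unit of spend
    }


# Made-up totals for illustration only.
before = baseline_metrics(
    AccountTotals(impressions=200_000, clicks=3_000, spend=1_500.0,
                  conversions=90, revenue=6_000.0)
)
for name, value in before.items():
    print(f"{name}: {value:.4f}")
```

Running the same function on your trial-period totals gives you the "after" column for a like-for-like comparison.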

Pro Tips

Create a simple spreadsheet with "Before Trial" and "After Trial" columns. This becomes your decision-making document. Focus on metrics that matter to your business—if speed matters more than performance, weight time savings heavily. If you're already fast but need better results, prioritize performance metrics.

2. Prepare Your Historical Performance Data

The Challenge It Solves

AI-powered automation platforms work by analyzing patterns in your existing data. Feed them garbage, and they'll generate garbage. Many marketers start their trial with disorganized creative libraries, inconsistent naming conventions, and no clear record of what actually worked. The AI can't identify your winning formulas if your data doesn't reveal them.

This preparation gap means the automation makes recommendations based on incomplete information—and you'll blame the platform when it's actually a data quality issue.

The Strategy Explained

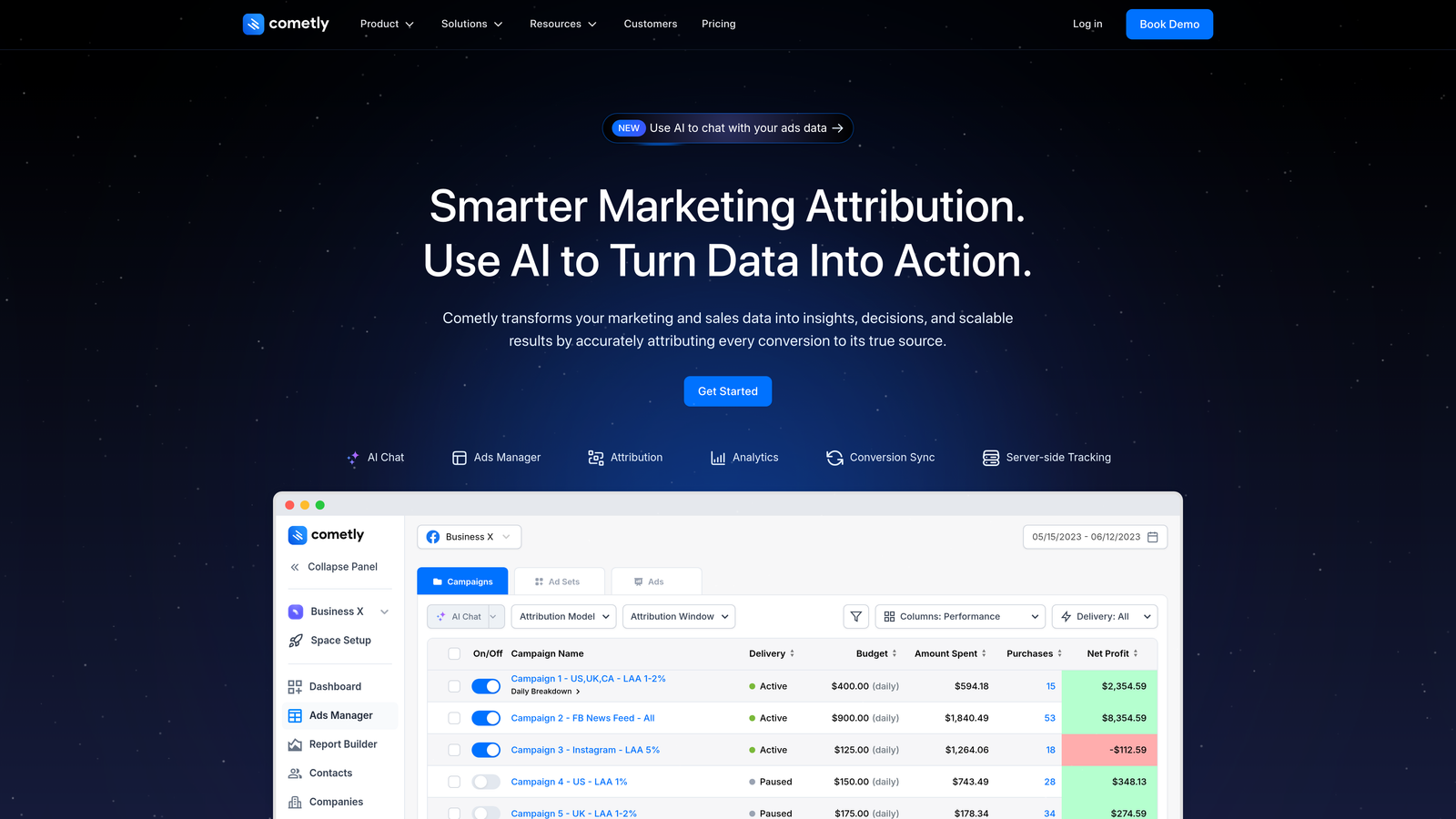

Spend your first trial day organizing, not building. Gather your top-performing creatives from the past 90 days. Identify your highest-converting audiences. Document which headlines, calls-to-action, and ad formats have historically delivered the best results. If you're using attribution tools like Cometly, ensure your tracking is properly configured before you start testing automation.

The goal is to give the AI platform a clear picture of what success looks like in your account. When platforms like AdStellar AI analyze your historical data, they're looking for patterns: which creative elements, targeting approaches, and budget strategies correlate with your best outcomes. Clean, organized data accelerates this learning. For a deeper understanding, explore what Meta ads automation is and how it leverages your data.

Implementation Steps

1. Export your top 20 performing ads from Meta Ads Manager, sorted by your primary KPI (conversions, ROAS, or engagement).

2. Create a "Winners Library" folder with your best-performing creatives, organized by campaign objective and format.

3. Document your most successful audience segments with notes on why they performed well and what offers resonated.

4. Verify your conversion tracking is firing correctly and your attribution window settings match your business model.
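If you export your ads as a CSV, ranking them by your primary KPI can be scripted. The sketch below assumes hypothetical column names (`ad_name`, `spend`, `conversions`, `revenue`) and uses an inline sample in place of a real file; your actual export will likely use different headers, so adjust the keys accordingly.

```python
import csv
import io

# Stand-in for an exported CSV; the column names are hypothetical.
SAMPLE_EXPORT = """ad_name,spend,conversions,revenue
Video - Testimonial,400.00,32,1800.00
Carousel - Features,250.00,10,500.00
Static - Discount,0.00,0,0.00
Static - Lifestyle,300.00,21,1500.00
"""


def top_ads(csv_text: str, kpi: str = "roas", limit: int = 20) -> list[dict]:
    """Return up to `limit` ads sorted by the chosen KPI, best first."""
    rows = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        spend = float(row["spend"])
        if spend <= 0:
            continue  # an ad with no spend has no meaningful ROAS
        rows.append({
            "ad_name": row["ad_name"],
            "conversions": int(row["conversions"]),
            "roas": float(row["revenue"]) / spend,
        })
    return sorted(rows, key=lambda r: r[kpi], reverse=True)[:limit]


winners = top_ads(SAMPLE_EXPORT, kpi="roas")
for ad in winners:
    print(f'{ad["ad_name"]}: ROAS {ad["roas"]:.2f}, {ad["conversions"]} conversions')
```

Passing `kpi="conversions"` ranks the same rows by conversion volume instead, which is useful if ROAS is not your primary KPI.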

Pro Tips

If you're evaluating a platform like AdStellar AI that features a Winners Hub for reusing proven ad elements, this preparation work pays double dividends. You're not just organizing for the AI—you're creating a reusable library that streamlines future campaign creation whether you automate or not.

3. Start With Your Proven Winners, Not Experiments

The Challenge It Solves

The temptation during a trial is to test the platform with completely new campaigns—new products, new audiences, experimental creative approaches. This seems logical: you're testing the tool's capabilities, right? Wrong. When you test with unknowns, you can't separate platform performance from campaign performance. A failed campaign might mean the automation doesn't work, or it might just mean your experimental offer was weak.

This approach wastes your limited trial time on campaigns that would have failed manually anyway.

The Strategy Explained

Your first automated campaigns should replicate your proven successes. Take a campaign that's currently working well manually and rebuild it using the automation platform. This creates a controlled comparison: you know what good performance looks like, so you can evaluate whether the AI matches, improves, or underperforms your manual baseline.

If the automation can successfully scale something you've already validated, that's meaningful evidence. If it struggles with your winners, that's a clear warning sign. Either outcome gives you actionable data. Learning how to get started with Meta ads automation properly helps you get the most from this validation phase.

Implementation Steps

1. Select your best-performing active campaign as your first automation test case—choose something with at least 30 days of solid performance data.

2. Use the automation platform to rebuild this campaign, paying attention to how the AI interprets your objectives and makes decisions about targeting, creative selection, and budget allocation.

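3. Launch the automated version alongside your manual campaign, giving each its own budget and, where possible, separating their audiences (for example with Meta's A/B test tool) so the two versions don't compete against each other in the auction.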

4. Compare performance after 5-7 days: does the automated version match or exceed your manual results?

Pro Tips

Look beyond just performance metrics. Evaluate how the AI's decisions align with your strategic thinking. If a platform like AdStellar AI shows you the rationale behind each targeting or creative choice through its AI agents' explanations, assess whether that logic matches your expertise. Transparent AI that you can validate is more valuable than a black box that happens to work.

4. Test Bulk Campaign Creation on Day Two

The Challenge It Solves

Time savings is often the primary selling point of automation platforms, but it's also the hardest to verify without direct testing. Vendors claim you'll build campaigns "10× faster" or "in under 60 seconds," but these numbers are meaningless without experiencing the workflow yourself. Many marketers complete trials without ever stress-testing the bulk creation features that deliver the real efficiency gains.

You end up evaluating automation based on single-campaign builds, which doesn't reflect how you'd actually use the tool at scale.

The Strategy Explained

Once your proven-winner rebuild is live, test the platform's bulk capabilities right away rather than waiting for its results to come in. Plan to create 5-10 campaign variations in one session: different audience segments, creative combinations, or budget strategies. This mirrors real-world usage, where you're not launching one perfect campaign but testing multiple approaches to find winners.

Time this process and compare it against how long these same campaigns would take manually. The difference reveals whether the platform delivers genuine efficiency or just shifts complexity around. Reviewing Meta ads workflow automation capabilities helps you understand which bulk features to prioritize.

Implementation Steps

1. Plan a batch of 5-10 related campaigns you'd realistically launch together (e.g., the same offer to different audience segments, or multiple creative variations to the same audience).

2. Time yourself using the bulk creation features to build all campaigns in one session, noting any friction points or confusion.

3. Estimate how long the same task would take manually based on your baseline audit from Strategy 1.

4. Calculate the time savings ratio and assess whether the quality of bulk-created campaigns matches your manual standards.
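The time savings ratio from the steps above is simple arithmetic, sketched here as a small helper. The function name and the sample figures are illustrative assumptions; the 45-minute manual build time echoes the example from Strategy 1, and the automated total should include any setup time you spent in the session.

```python
def time_savings(manual_minutes_each: float, campaigns: int,
                 automated_minutes_total: float) -> dict[str, float]:
    """Compare an estimated manual build time against a timed bulk session."""
    manual_total = manual_minutes_each * campaigns
    if manual_total <= 0 or automated_minutes_total <= 0:
        raise ValueError("durations and campaign count must be positive")
    return {
        "manual_total_min": manual_total,
        # How many times faster the bulk session was than manual building.
        "speedup": manual_total / automated_minutes_total,
        # Share of the manual time that the bulk session saved.
        "percent_saved": 100 * (1 - automated_minutes_total / manual_total),
    }


# Illustrative numbers: 8 campaigns at 45 minutes each by hand,
# versus one 90-minute bulk session including setup.
result = time_savings(manual_minutes_each=45, campaigns=8,
                      automated_minutes_total=90)
print(result)
```

A speedup close to 1 suggests the platform is shifting effort around rather than removing it, which is worth recording alongside your quality notes.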

Pro Tips

Pay attention to the setup-to-launch ratio. Some platforms require extensive configuration upfront but then create campaigns rapidly. Others are quick to start but require manual refinement afterward. Neither is wrong—but one might fit your workflow better. If you're testing AdStellar AI's bulk launch capabilities, evaluate whether the 7 specialized agents maintain quality when building at speed.

5. Evaluate the AI's Decision Transparency

The Challenge It Solves

Black box automation is dangerous. When an AI makes targeting decisions, selects creative, or allocates budget without explaining why, you're forced to either trust blindly or second-guess everything. Many marketers abandon automation platforms not because they don't work, but because they can't validate the AI's logic against their own expertise.

This trust gap becomes critical when performance dips and you need to diagnose what went wrong.

The Strategy Explained

During your trial, actively interrogate the platform's decision-making process. When it recommends an audience, does it explain why? When it selects certain creatives over others, can you see the performance data that informed that choice? When it allocates budget across ad sets, does the logic align with your understanding of Meta's auction dynamics?

Transparency isn't just a nice-to-have feature. It's what allows you to learn from the AI, validate its recommendations, and intervene when necessary. The best automation platforms teach you to be a better marketer, not just a button-pusher. Examining Meta ads targeting automation features reveals how platforms handle audience selection transparency.

Implementation Steps

1. For each automated campaign you create, document what decisions the AI made: audience selection, creative choices, budget distribution, and campaign structure.

2. Look for explanations or rationale—does the platform show you why it made each choice, or just what it chose?

3. Compare the AI's decisions against what you would have done manually: where do they align, and where do they diverge?

4. Test whether you can override AI recommendations when you disagree—and whether the platform explains the potential impact of your changes.

Pro Tips

Platforms like AdStellar AI that feature specialized agents (Director, Targeting Strategist, Creative Curator, etc.) often provide agent-specific rationale for decisions. This granular transparency lets you evaluate each component of the campaign build separately. If you trust the targeting logic but question the creative selection, you can intervene precisely rather than scrapping the entire automated approach.

6. Run a Head-to-Head Campaign Comparison

The Challenge It Solves

Anecdotal impressions don't hold up in budget meetings. You need quantifiable proof that automation delivers better results, faster execution, or both. Without a direct comparison under controlled conditions, you're comparing apples to oranges—your automated campaigns might be testing different products, audiences, or time periods than your manual ones.

This makes it impossible to isolate the automation variable and measure its true impact.

The Strategy Explained

Design a controlled experiment: create two identical campaigns promoting the same offer, with the only difference being that one is built manually and one is built using automation. Split your budget evenly between them. Launch simultaneously. Let them run for at least 5-7 days to gather meaningful data.

This head-to-head test reveals whether automation genuinely improves performance or just changes your workflow. You'll see differences in setup time, ongoing management requirements, and ultimate results: all the data you need for a confident decision. Reading Meta ads automation vs Ads Manager comparisons provides context for what performance differences to expect.

Implementation Steps

1. Choose a current campaign or upcoming launch that you can split-test without cannibalizing results.

2. Build one version manually using your standard process, documenting time spent and decisions made.

3. Build a matching version (same offer, objective, and budget) using the automation platform, again tracking time and noting how the AI's decisions differ from your manual choices.

4. Launch both simultaneously with equal budgets and let them run for 5-7 days, then compare: CTR, CPC, conversion rate, ROAS, and total time invested (build + management).
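With only 5-7 days of data, a gap between the two campaigns can easily be noise. One common way to sanity-check a difference in conversion rate is a two-proportion z-test, sketched below using only the standard library. This is a swapped-in statistical technique rather than anything the platforms themselves prescribe, and the conversion and click counts are made up for illustration.

```python
import math


def conversion_rate_z_test(conv_a: int, clicks_a: int,
                           conv_b: int, clicks_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test; returns (z, p_value) for B versus A."""
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    # Pooled rate under the assumption that both campaigns convert equally.
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative counts: manual campaign (A) versus automated campaign (B).
z, p = conversion_rate_z_test(conv_a=40, clicks_a=1_000,
                              conv_b=52, clicks_b=1_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

In this made-up example the automated campaign converts 30% better in relative terms, yet the p-value comes out at roughly 0.2, so the difference is not distinguishable from chance at the usual 0.05 threshold. If your real numbers look similar, treat the performance result as inconclusive and lean on the time-invested comparison instead.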

Pro Tips

Don't just compare final performance numbers. Track ongoing management time too. If your automated campaign requires less daily optimization but delivers similar results, that's a win—you've freed up time for strategy. If it matches your manual campaign's performance but took 80% less time to build, that's a different kind of win. Define success based on your actual business priorities.

7. Stress-Test Scalability Before Trial Ends

The Challenge It Solves

Platforms often perform beautifully with 2-3 test campaigns, then buckle under real-world volume. You sign up based on your trial experience, only to discover the tool becomes sluggish, error-prone, or limited when you're managing 50+ active campaigns across multiple clients or product lines. This scalability gap doesn't reveal itself until you're already committed.

By then, you've invested time learning the platform and migrating workflows—switching costs are high.

The Strategy Explained

In your final trial days, deliberately push the platform to your actual usage limits. If you typically manage 30 campaigns simultaneously, create 30 campaigns. If you launch 100+ ad variations per month, test whether the bulk tools maintain quality at that volume. If you need multiple workspace environments for different clients or brands, set those up and evaluate the organizational features.

This stress test reveals whether the platform can handle your real needs, not just your trial-period experimentation. It's better to discover limitations now than after you've committed. For agencies managing multiple accounts, understanding the requirements of Meta ads automation for agencies helps frame your scalability testing.

Implementation Steps

1. Calculate your typical monthly volume: how many campaigns, ad sets, and creative variations you launch in a normal month.

2. Compress that volume into your final 2-3 trial days—create campaigns at scale to simulate real-world usage patterns.

3. Test organizational features: workspace management, campaign naming conventions, filtering and search capabilities, and bulk editing tools.

4. Evaluate performance under load: does the platform slow down, throw errors, or maintain speed and reliability when managing higher volume?
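To plan the compressed schedule and keep a record of how the platform behaves under load, a short script is enough. The sketch below is an assumption-laden illustration: the volume categories, the build times, and the error count are placeholder values you would replace with your own monthly numbers and your own stopwatch observations.

```python
import math
import statistics


def daily_targets(monthly_volume: dict[str, int], trial_days: int) -> dict[str, int]:
    """Spread a month's volume across the remaining trial days, rounding up."""
    if trial_days <= 0:
        raise ValueError("trial_days must be positive")
    return {item: math.ceil(count / trial_days)
            for item, count in monthly_volume.items()}


def summarize_load(build_seconds: list[float], errors: int) -> dict[str, float]:
    """Summarize hand-recorded build times and failed attempts from a session."""
    if not build_seconds:
        raise ValueError("record at least one successful build")
    attempts = len(build_seconds) + errors
    return {
        "median_seconds": statistics.median(build_seconds),
        "slowest_seconds": max(build_seconds),
        "error_rate": errors / attempts,
    }


# Placeholder volume: 30 campaigns and 100 ad variations in a normal month.
targets = daily_targets({"campaigns": 30, "ad_variations": 100}, trial_days=3)
# Placeholder observations: five successful builds and one failed attempt.
summary = summarize_load([52.0, 48.0, 61.0, 95.0, 50.0], errors=1)
print(targets)
print(summary)
```

Comparing the median against the slowest build is a quick way to spot slowdowns that only appear as volume climbs.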

Pro Tips

If you're evaluating a platform that offers unlimited workspaces (like AdStellar AI), test this feature even if you only have one brand currently. Your needs might expand, and knowing the platform can grow with you adds value. Similarly, test any integration features—if the platform connects with attribution tools like Cometly, verify those integrations work smoothly at scale, not just with test data.

Putting It All Together

Your free trial isn't just a product demo—it's a controlled experiment that should answer one question: will this platform save me time while improving results? The difference between a wasted trial and a confident purchasing decision comes down to structure.

By auditing your current workflow before you start, you create measurable baselines. By preparing your historical performance data, you give the AI quality inputs. By testing with proven winners first, you validate accuracy before experimenting. By evaluating bulk creation, decision transparency, head-to-head comparisons, and scalability, you gather comprehensive evidence across all the dimensions that matter.

The most successful trial users treat these days as a structured evaluation, not casual exploration. They emerge with concrete data: "Automated campaigns matched manual performance while reducing build time by 75%" or "The platform struggled with our audience complexity" or "Bulk creation maintained quality at scale." These are decision-making statements, not vague impressions.

Think of your trial period as an investment in clarity. The hours you spend systematically testing will save you from months of buyer's remorse—or worse, missed opportunities because you dismissed a tool that could have transformed your workflow.

Ready to put these strategies into action? Start a free trial with AdStellar AI and see how seven specialized AI agents can build complete Meta campaigns in under 60 seconds, giving you the data you need to decide with confidence. The platform's AI Campaign Builder, featuring specialized agents for strategy, targeting, creative curation, and copywriting, provides the transparency and scalability these evaluation strategies require. You'll see exactly how the AI thinks, validate its decisions against your expertise, and stress-test its capabilities with your real-world volume, all within your trial period.